symebcovmf_overlap_backfit

Annie Xie

2025-05-15

Last updated: 2025-06-05


Knit directory: symmetric_covariance_decomposition/

This reproducible R Markdown analysis was created with workflowr (version 1.7.1).

The command set.seed(20250408) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.


The results in this page were generated with repository version 1e1f127. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.


These are the previous versions of the repository in which changes were made to the R Markdown (analysis/symebcovmf_overlap_backfit.Rmd) and HTML (docs/symebcovmf_overlap_backfit.html) files.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 1e1f127 | Annie Xie | 2025-06-05 | Add analysis of backfit in overlapping setting |

Introduction

In this example, we test out symEBcovMF with backfit on overlapping (but not necessarily hierarchical)-structured data.

Motivation

I am interested in testing whether backfitting helps symEBcovMF in the overlapping setting (particularly in the setting where we set Kmax to be the true number of factors). Our high-level goal is to develop a method that does well in both the tree setting and the overlapping setting.

Packages and Functions

library(ebnm)

library(pheatmap)

library(ggplot2)

library(lpSolve)

source('code/symebcovmf_functions.R')

source('code/visualization_functions.R')

compute_crossprod_similarity <- function(est, truth){

K_est <- ncol(est)

K_truth <- ncol(truth)

n <- nrow(est)

#if estimates don't have same number of columns, try padding the estimate with zeros and make cosine similarity zero

if (K_est < K_truth){

est <- cbind(est, matrix(rep(0, n*(K_truth-K_est)), nrow = n))

}

if (K_est > K_truth){

truth <- cbind(truth, matrix(rep(0, n*(K_est - K_truth)), nrow = n))

}

#normalize est and truth

norms_est <- apply(est, 2, function(x){sqrt(sum(x^2))})

norms_est[norms_est == 0] <- Inf

norms_truth <- apply(truth, 2, function(x){sqrt(sum(x^2))})

norms_truth[norms_truth == 0] <- Inf

est_normalized <- t(t(est)/norms_est)

truth_normalized <- t(t(truth)/norms_truth)

#compute matrix of cosine similarities

cosine_sim_matrix <- abs(crossprod(est_normalized, truth_normalized))

assignment_problem <- lp.assign(t(cosine_sim_matrix), direction = "max")

return((1/K_truth)*assignment_problem$objval)

}

permute_L <- function(est, truth){

K_est <- ncol(est)

K_truth <- ncol(truth)

n <- nrow(est)

#if estimates don't have same number of columns, try padding the estimate with zeros and make cosine similarity zero

if (K_est < K_truth){

est <- cbind(est, matrix(rep(0, n*(K_truth-K_est)), nrow = n))

}

if (K_est > K_truth){

truth <- cbind(truth, matrix(rep(0, n*(K_est - K_truth)), nrow = n))

}

#normalize est and truth

norms_est <- apply(est, 2, function(x){sqrt(sum(x^2))})

norms_est[norms_est == 0] <- Inf

norms_truth <- apply(truth, 2, function(x){sqrt(sum(x^2))})

norms_truth[norms_truth == 0] <- Inf

est_normalized <- t(t(est)/norms_est)

truth_normalized <- t(t(truth)/norms_truth)

#compute matrix of cosine similarities

cosine_sim_matrix <- abs(crossprod(est_normalized, truth_normalized))

assignment_problem <- lp.assign(t(cosine_sim_matrix), direction = "max")

perm <- apply(assignment_problem$solution, 1, which.max)

return(est[,perm])

}

Backfit Function

optimize_factor <- function(R, ebnm_fn, maxiter, tol, v_init, lambda_k, R2k, n, KL){

R2 <- R2k - lambda_k^2

resid_s2 <- estimate_resid_s2(n = n, R2 = R2)

rank_one_KL <- 0

curr_elbo <- -Inf

obj_diff <- Inf

fitted_g_k <- NULL

iter <- 1

vec_elbo_full <- NULL

v <- v_init

while((iter <= maxiter) && (obj_diff > tol)){

# update l; power iteration step

v.old <- v

x <- R %*% v

e <- ebnm_fn(x = x, s = sqrt(resid_s2), g_init = fitted_g_k)

scaling_factor <- sqrt(sum(e$posterior$mean^2) + sum(e$posterior$sd^2))

if (scaling_factor == 0){ # check if scaling factor is zero

scaling_factor <- Inf

v <- e$posterior$mean/scaling_factor

print('Warning: scaling factor is zero')

break

}

v <- e$posterior$mean/scaling_factor

# update lambda and R2

lambda_k.old <- lambda_k

lambda_k <- max(as.numeric(t(v) %*% R %*% v), 0)

R2 <- R2k - lambda_k^2

#store estimate for g

fitted_g_k.old <- fitted_g_k

fitted_g_k <- e$fitted_g

# store KL

rank_one_KL.old <- rank_one_KL

rank_one_KL <- as.numeric(e$log_likelihood) +

- normal_means_loglik(x, sqrt(resid_s2), e$posterior$mean, e$posterior$mean^2 + e$posterior$sd^2)

# update resid_s2

resid_s2.old <- resid_s2

resid_s2 <- estimate_resid_s2(n = n, R2 = R2) # this goes negative?????

# check convergence - maybe change to rank-one obj function

curr_elbo.old <- curr_elbo

curr_elbo <- compute_elbo(resid_s2 = resid_s2,

n = n,

KL = c(KL, rank_one_KL),

R2 = R2)

if (iter > 1){

obj_diff <- curr_elbo - curr_elbo.old

}

if (obj_diff < 0){ # check if convergence_val < 0

v <- v.old

resid_s2 <- resid_s2.old

rank_one_KL <- rank_one_KL.old

lambda_k <- lambda_k.old

curr_elbo <- curr_elbo.old

fitted_g_k <- fitted_g_k.old

print(paste('elbo decreased by', abs(obj_diff)))

break

}

vec_elbo_full <- c(vec_elbo_full, curr_elbo)

iter <- iter + 1

}

return(list(v = v, lambda_k = lambda_k, resid_s2 = resid_s2, curr_elbo = curr_elbo, vec_elbo_full = vec_elbo_full, fitted_g_k = fitted_g_k, rank_one_KL = rank_one_KL))

}

#nullcheck function

nullcheck_factors <- function(sym_ebcovmf_obj, L2_tol = 10^(-8)){

null_lambda_idx <- which(sym_ebcovmf_obj$lambda == 0)

factor_L2_norms <- apply(sym_ebcovmf_obj$L_pm, 2, function(v){sqrt(sum(v^2))})

null_factor_idx <- which(factor_L2_norms < L2_tol)

null_idx <- unique(c(null_lambda_idx, null_factor_idx))

keep_idx <- setdiff(c(1:length(sym_ebcovmf_obj$lambda)), null_idx)

if (length(keep_idx) < length(sym_ebcovmf_obj$lambda)){

#remove factors

sym_ebcovmf_obj$L_pm <- sym_ebcovmf_obj$L_pm[,keep_idx]

sym_ebcovmf_obj$lambda <- sym_ebcovmf_obj$lambda[keep_idx]

sym_ebcovmf_obj$KL <- sym_ebcovmf_obj$KL[keep_idx]

sym_ebcovmf_obj$fitted_gs <- sym_ebcovmf_obj$fitted_gs[keep_idx]

}

#shouldn't need to recompute objective function or other things

return(sym_ebcovmf_obj)

}

sym_ebcovmf_backfit <- function(S, sym_ebcovmf_obj, ebnm_fn, backfit_maxiter = 100, backfit_tol = 10^(-8), optim_maxiter= 500, optim_tol = 10^(-8)){

K <- length(sym_ebcovmf_obj$lambda)

iter <- 1

obj_diff <- Inf

sym_ebcovmf_obj$backfit_vec_elbo_full <- NULL

# refit lambda

sym_ebcovmf_obj <- refit_lambda(S, sym_ebcovmf_obj, maxiter = 25)

while((iter <= backfit_maxiter) && (obj_diff > backfit_tol)){

#print(iter)

obj_old <- sym_ebcovmf_obj$elbo

# loop through each factor

for (k in 1:K){

#print(k)

# compute residual matrix

R <- S - tcrossprod(sym_ebcovmf_obj$L_pm[,-k] %*% diag(sqrt(sym_ebcovmf_obj$lambda[-k]), ncol = (K-1)))

R2k <- compute_R2(S, sym_ebcovmf_obj$L_pm[,-k], sym_ebcovmf_obj$lambda[-k], (K-1)) #this is right but I have one instance where the values don't match what I expect

# optimize factor

factor_proposed <- optimize_factor(R, ebnm_fn, optim_maxiter, optim_tol, sym_ebcovmf_obj$L_pm[,k], sym_ebcovmf_obj$lambda[k], R2k, sym_ebcovmf_obj$n, sym_ebcovmf_obj$KL[-k])

# update object

sym_ebcovmf_obj$L_pm[,k] <- factor_proposed$v

sym_ebcovmf_obj$KL[k] <- factor_proposed$rank_one_KL

sym_ebcovmf_obj$lambda[k] <- factor_proposed$lambda_k

sym_ebcovmf_obj$resid_s2 <- factor_proposed$resid_s2

sym_ebcovmf_obj$fitted_gs[[k]] <- factor_proposed$fitted_g_k

sym_ebcovmf_obj$elbo <- factor_proposed$curr_elbo

sym_ebcovmf_obj$backfit_vec_elbo_full <- c(sym_ebcovmf_obj$backfit_vec_elbo_full, factor_proposed$vec_elbo_full)

#print(sym_ebcovmf_obj$elbo)

sym_ebcovmf_obj <- refit_lambda(S, sym_ebcovmf_obj) # add refitting step?

#print(sym_ebcovmf_obj$elbo)

}

iter <- iter + 1

obj_diff <- abs(sym_ebcovmf_obj$elbo - obj_old)

# need to add check if it is negative?

}

# nullcheck

sym_ebcovmf_obj <- nullcheck_factors(sym_ebcovmf_obj)

return(sym_ebcovmf_obj)

}

Data Generation

# args is a list containing n, p, k, indiv_sd, group_sd, pi1, constrain_F, and seed

sim_binary_loadings_data <- function(args) {

set.seed(args$seed)

FF <- matrix(rnorm(args$k * args$p, sd = args$group_sd), ncol = args$k)

if (args$constrain_F) {

FF_svd <- svd(FF)

FF <- FF_svd$u

FF <- t(t(FF) * rep(args$group_sd, args$k) * sqrt(args$p))

}

LL <- matrix(rbinom(args$n*args$k, 1, args$pi1), nrow = args$n, ncol = args$k)

E <- matrix(rnorm(args$n * args$p, sd = args$indiv_sd), nrow = args$n)

Y <- LL %*% t(FF) + E

YYt <- (1/args$p)*tcrossprod(Y)

return(list(Y = Y, YYt = YYt, LL = LL, FF = FF, K = ncol(LL)))

}

n <- 100

p <- 1000

k <- 10

pi1 <- 0.1

indiv_sd <- 1

group_sd <- 1

seed <- 1

sim_args = list(n = n, p = p, k = k, pi1 = pi1, indiv_sd = indiv_sd, group_sd = group_sd, seed = seed, constrain_F = FALSE)

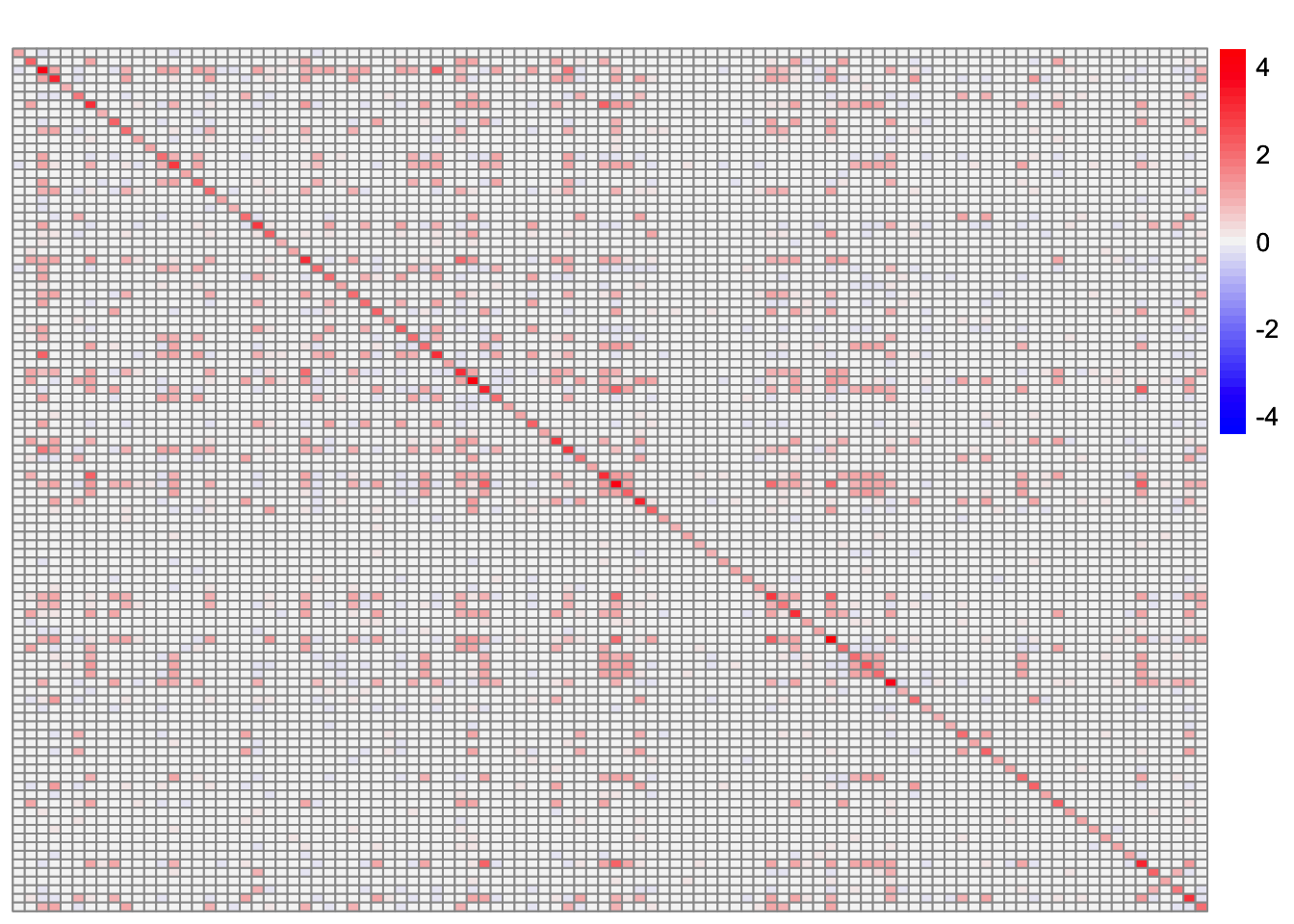

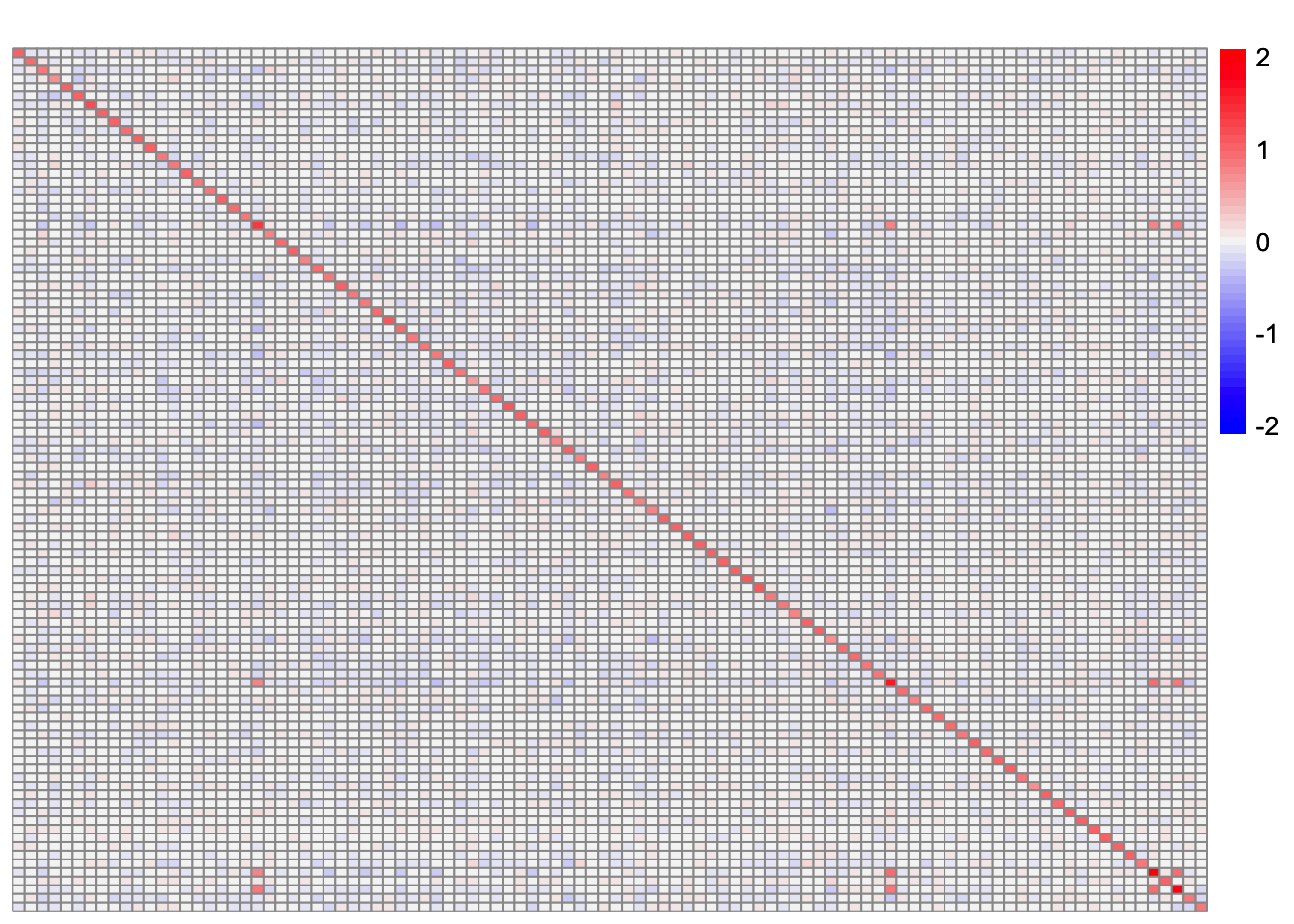

sim_data <- sim_binary_loadings_data(sim_args)

This is a heatmap of the scaled Gram matrix:

plot_heatmap(sim_data$YYt, colors_range = c('blue','gray96','red'), brks = seq(-max(abs(sim_data$YYt)), max(abs(sim_data$YYt)), length.out = 50))

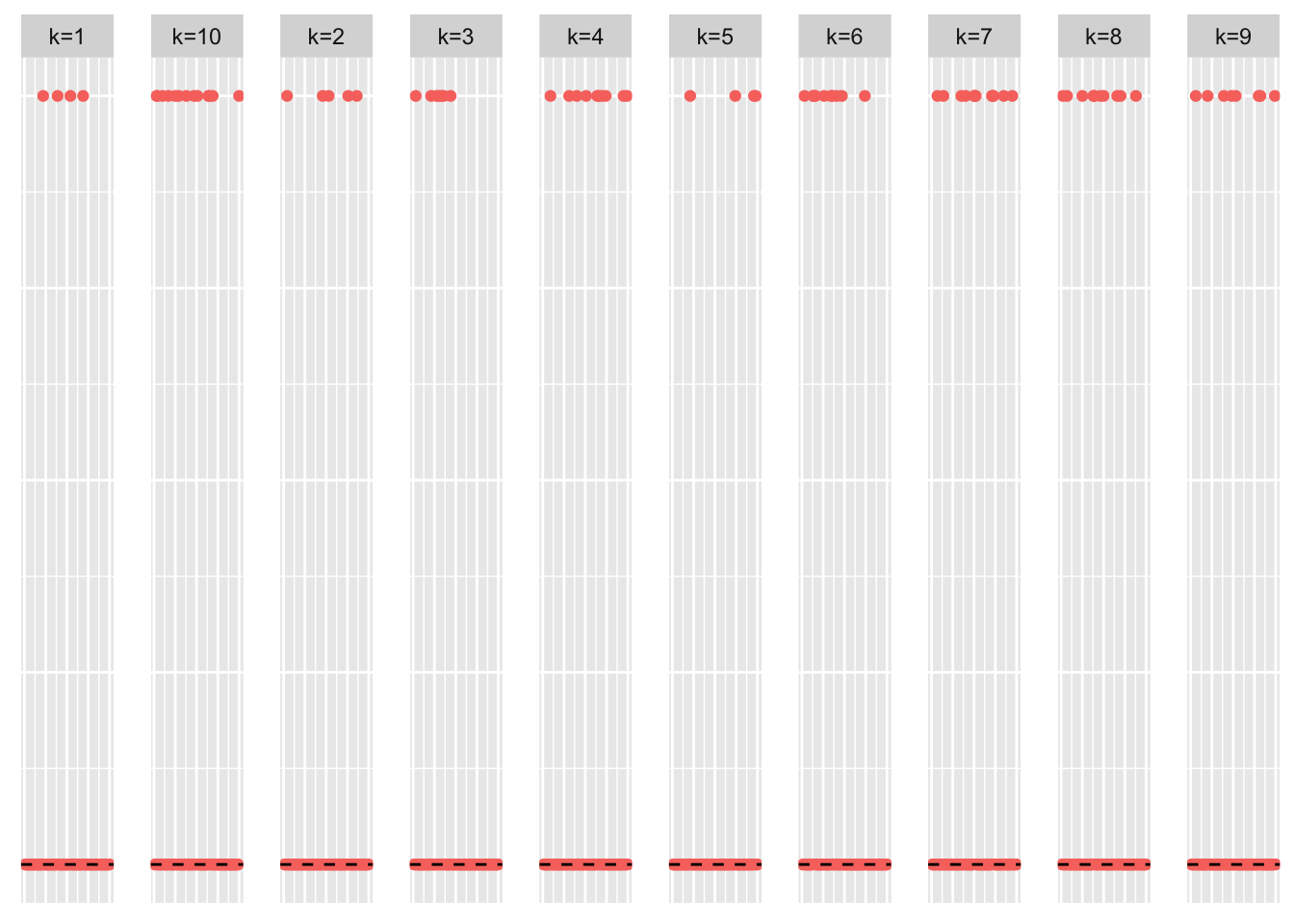

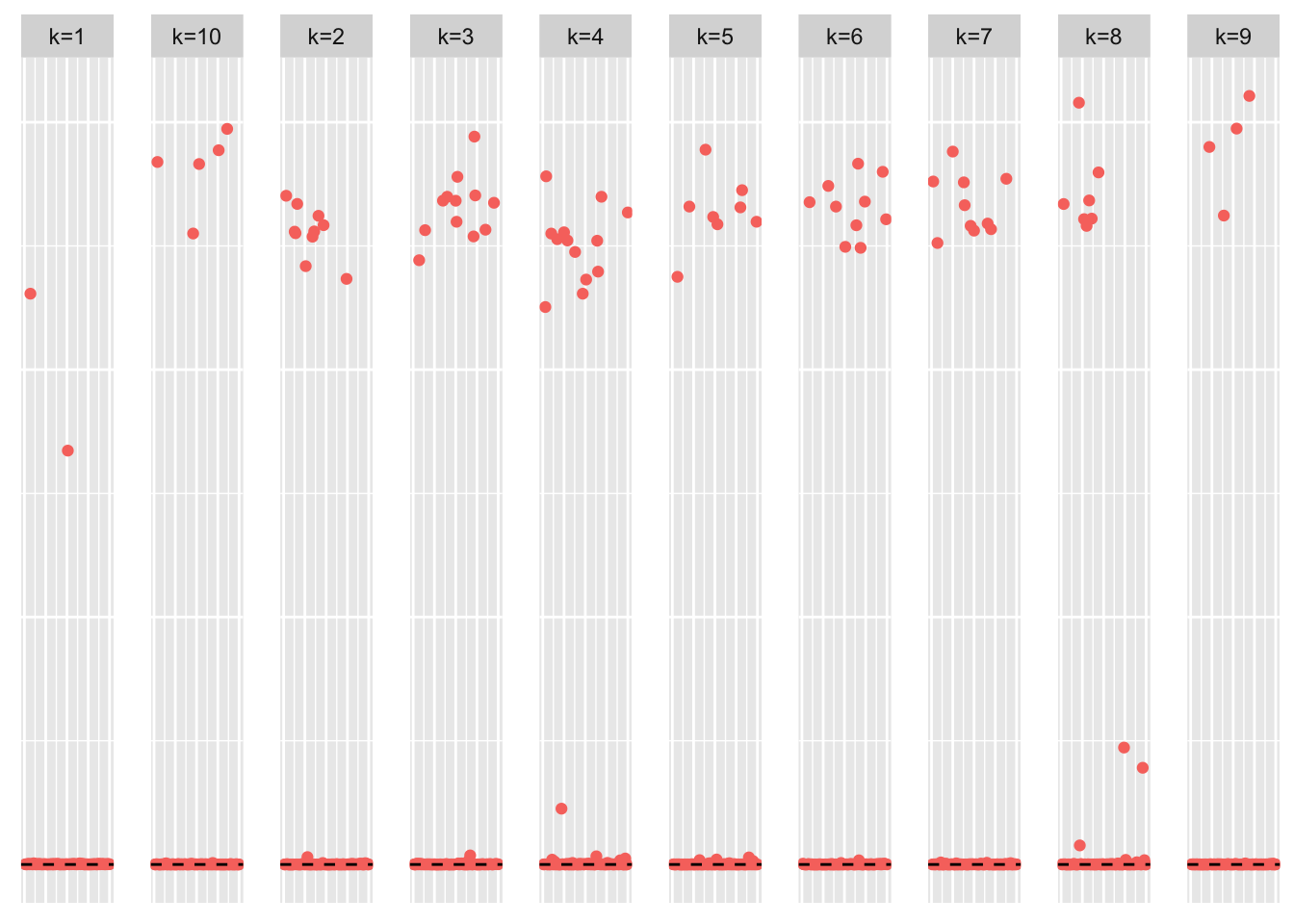
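As a rough guide to what the scaled Gram matrix should look like, conditional on the loadings its expectation is group_sd^2 * L L' + indiv_sd^2 * I when the entries of F are independent normals (the constrain_F = FALSE case). The sketch below checks this numerically on a small toy simulation. It does not use sim_data; the names n_toy, p_toy, k_toy, L_sim, F_sim, and E_sim are made up for illustration, and the toy sizes are my own choice.

```r
# Toy check (base R only): (1/p) * Y Y' should be close to L L' + I
# when group_sd = indiv_sd = 1 and p is large.
set.seed(1)
n_toy <- 20
p_toy <- 20000
k_toy <- 3

L_sim <- matrix(rbinom(n_toy * k_toy, 1, 0.3), nrow = n_toy)  # binary loadings
F_sim <- matrix(rnorm(p_toy * k_toy, sd = 1), ncol = k_toy)   # factors, group_sd = 1
E_sim <- matrix(rnorm(n_toy * p_toy, sd = 1), nrow = n_toy)   # noise, indiv_sd = 1

Y_toy <- L_sim %*% t(F_sim) + E_sim
gram_toy <- tcrossprod(Y_toy) / p_toy

# Expected value of the scaled Gram matrix given the loadings
expected_gram <- tcrossprod(L_sim) + diag(n_toy)

# Largest entrywise deviation; this shrinks as p_toy grows
max_dev <- max(abs(gram_toy - expected_gram))
print(max_dev)
```

So the off-diagonal blocks in the heatmap roughly count how many factors two samples share, and the diagonal carries an extra indiv_sd^2 from the noise.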

This is a scatter plot of the true loadings matrix:

pop_vec <- rep('A', n)

plot_loadings(sim_data$LL, pop_vec, legendYN = FALSE)

This is a heatmap of the true loadings matrix:

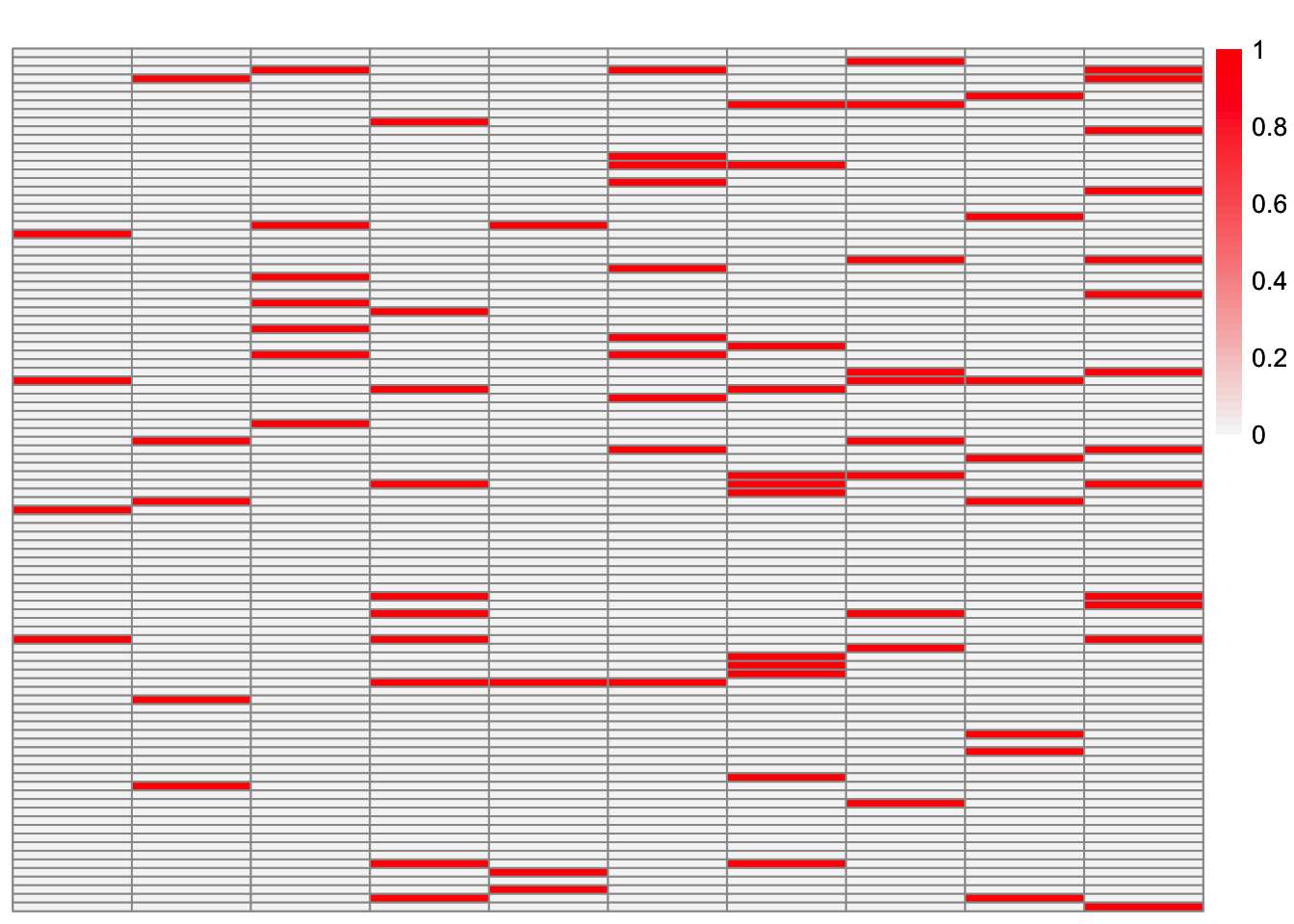

plot_heatmap(sim_data$LL)

Regular symEBcovMF

symebcovmf_overlap_refit_fit <- sym_ebcovmf_fit(S = sim_data$YYt, ebnm_fn = ebnm::ebnm_point_exponential, K = 10, maxiter = 100, rank_one_tol = 10^(-8), tol = 10^(-8), refit_lam = TRUE)

This is a scatter plot of \(\hat{L}_{refit}\), the estimate from symEBcovMF:

plot_loadings(symebcovmf_overlap_refit_fit$L_pm %*% diag(sqrt(symebcovmf_overlap_refit_fit$lambda)), pop_vec, legendYN = FALSE)

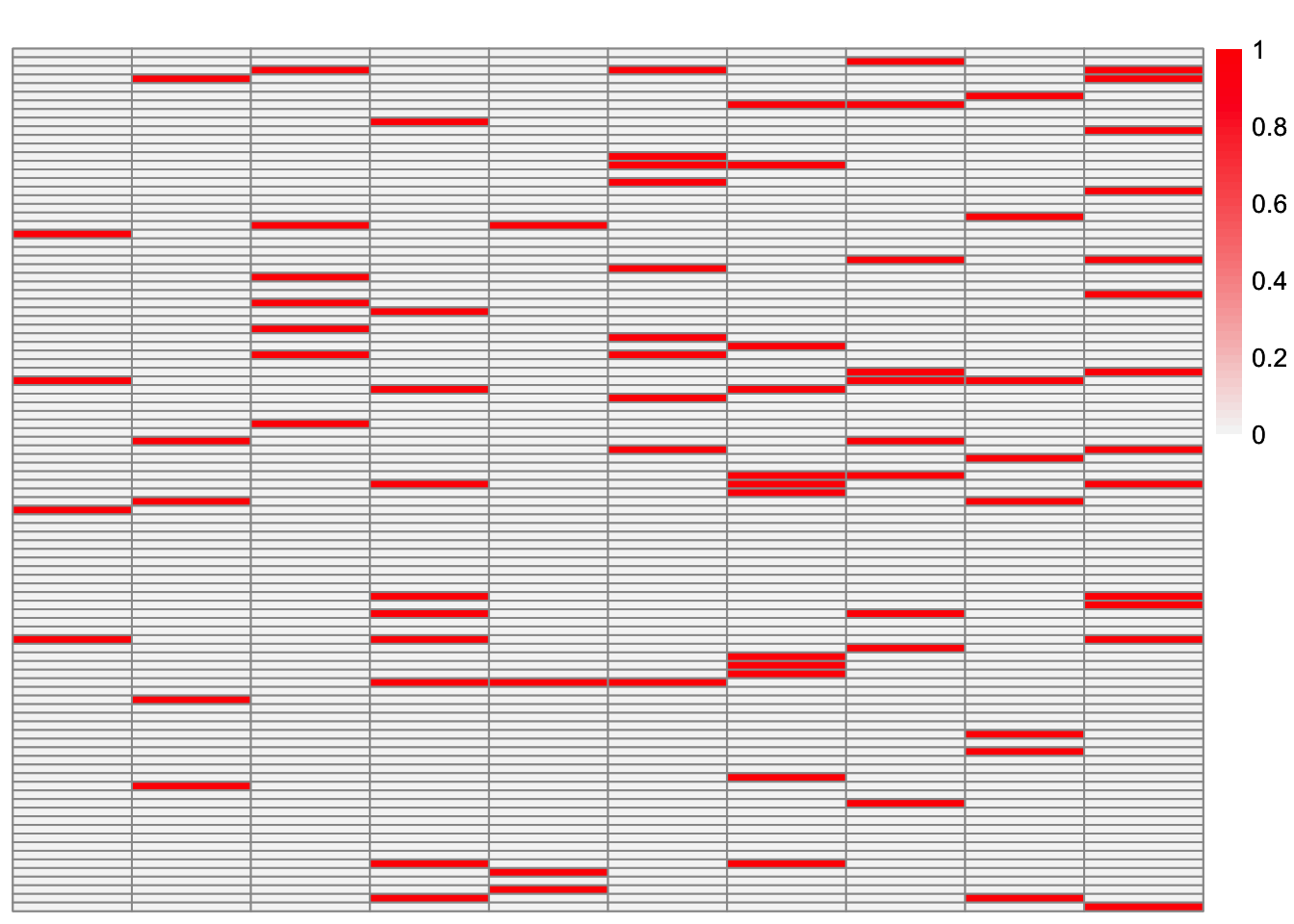

This is a heatmap of the true loadings matrix:

plot_heatmap(sim_data$LL)

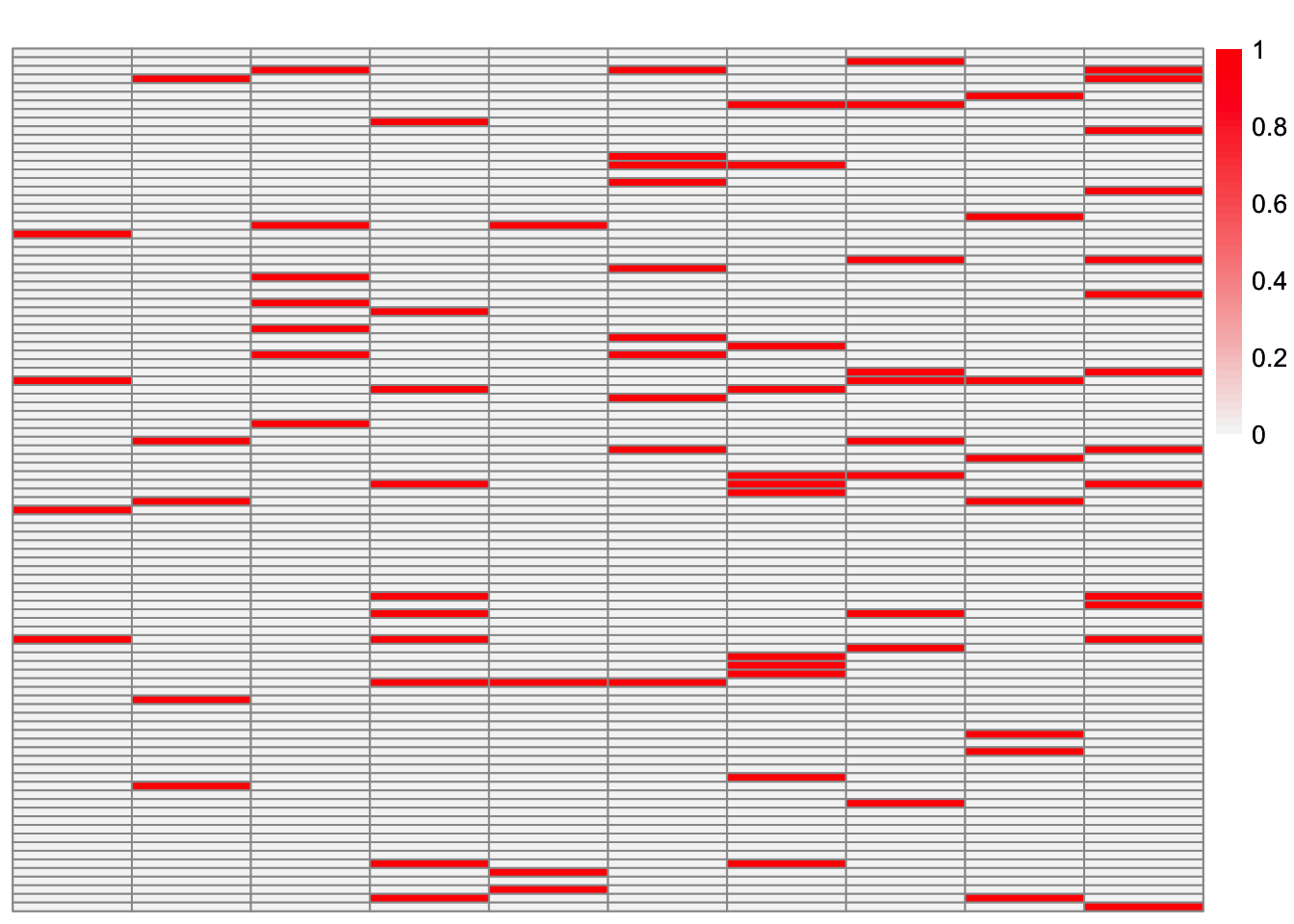

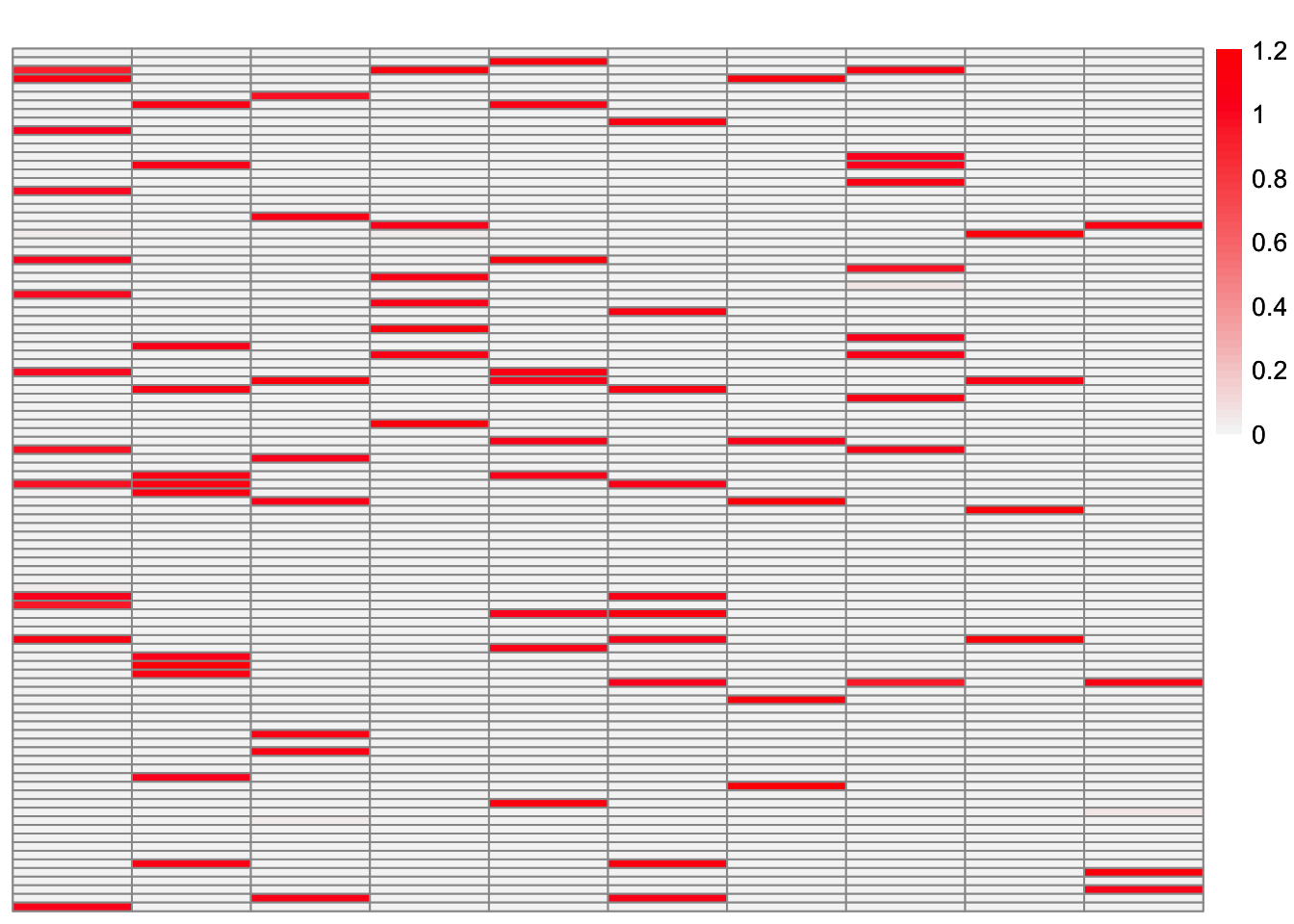

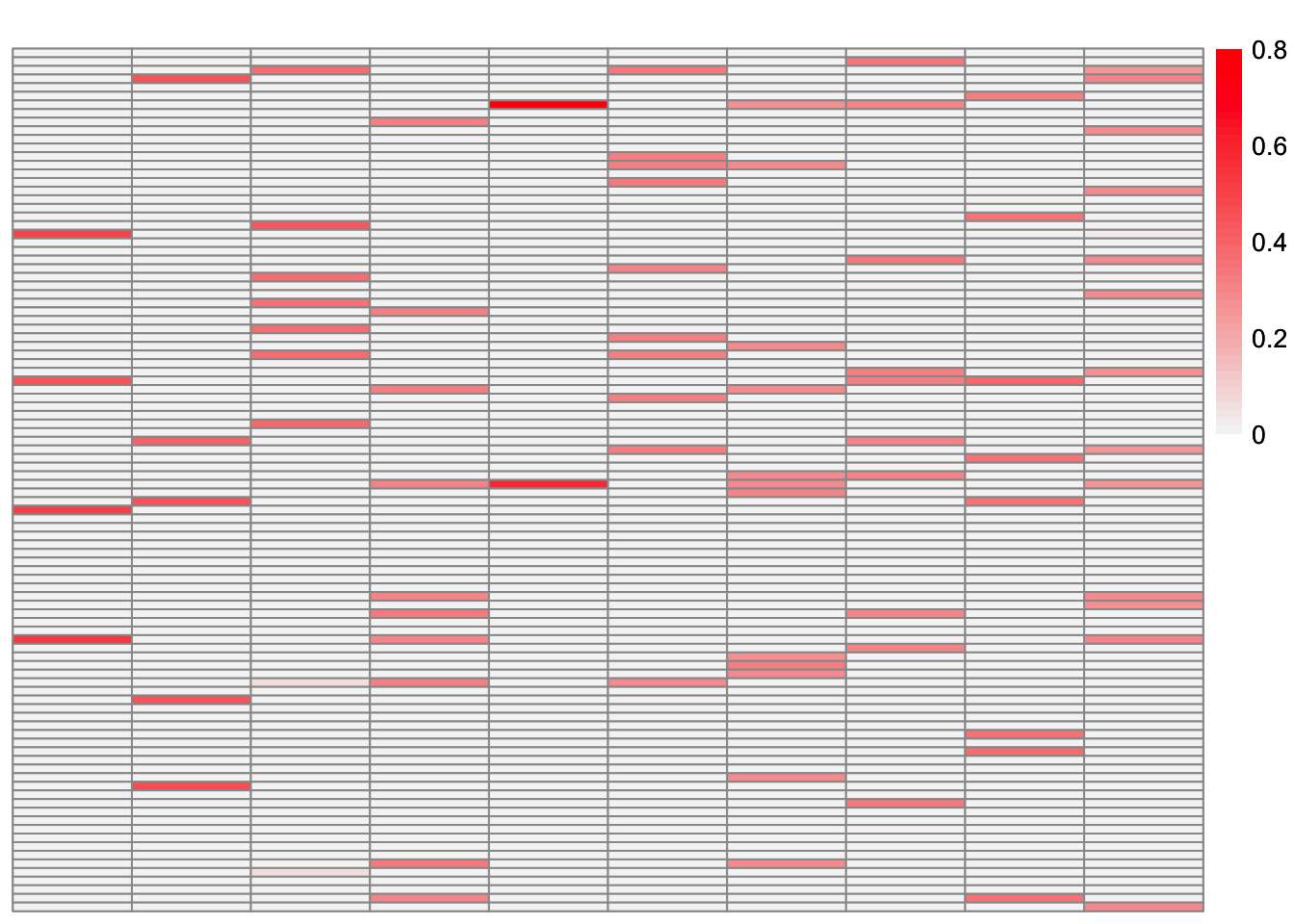

This is a heatmap of \(\hat{L}_{refit}\). The columns have been permuted to match the true loadings matrix:

symebcovmf_overlap_refit_fit_L_permuted <- permute_L(symebcovmf_overlap_refit_fit$L_pm, sim_data$LL)

plot_heatmap(symebcovmf_overlap_refit_fit_L_permuted, brks = seq(0, max(symebcovmf_overlap_refit_fit_L_permuted), length.out = 50))

This is a heatmap of \(\hat{L}_{refit}\) where the columns have not been permuted. The order corresponds to the order in which the factors were added.

plot_heatmap(symebcovmf_overlap_refit_fit$L_pm %*% diag(sqrt(symebcovmf_overlap_refit_fit$lambda)), brks = seq(0, max(symebcovmf_overlap_refit_fit$L_pm %*% diag(sqrt(symebcovmf_overlap_refit_fit$lambda))), length.out = 50))

This is the objective function value attained:

symebcovmf_overlap_refit_fit$elbo

[1] 1346.566

This is the crossproduct similarity of \(\hat{L}_{refit}\):

compute_crossprod_similarity(symebcovmf_overlap_refit_fit$L_pm, sim_data$LL)

[1] 0.8462461

Observations

Greedy symEBcovMF does an okay job at recovering the overlapping structure. Some of the estimates match the corresponding true factors very well, e.g. factors 1, 2, and 4. However, some estimates are denser than their corresponding true factors, e.g. factors 5, 6, 9, and 10. There are also a few estimates which differ from their corresponding true factors by a couple of samples, e.g. factors 3, 7, and 8.

Looking at the heatmap where the columns are ordered by the order they were added, we see that the earlier factors are more dense, while the later factors are more sparse. The factors added later are the factors which more closely match their corresponding true factors.
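To make the crossproduct similarity metric concrete, here is a minimal sketch on a toy example. It follows the same recipe as compute_crossprod_similarity (normalize the columns, take absolute cosine similarities, match estimated columns to true columns, and average), with two simplifications that are my own: a brute-force search over permutations stands in for lp.assign, and both matrices are assumed to have the same number of columns, so there is no zero-padding. The names all_perms, matched_cosine_similarity, and the toy matrices are hypothetical.

```r
# Enumerate all permutations of a vector (only practical for a few columns).
all_perms <- function(v) {
  if (length(v) <= 1) return(list(v))
  out <- list()
  for (i in seq_along(v)) {
    for (rest in all_perms(v[-i])) {
      out[[length(out) + 1]] <- c(v[i], rest)
    }
  }
  out
}

# Average absolute cosine similarity under the best column matching.
matched_cosine_similarity <- function(est, truth) {
  normalize <- function(M) {
    norms <- sqrt(colSums(M^2))
    norms[norms == 0] <- Inf  # zero columns get similarity 0
    sweep(M, 2, norms, "/")
  }
  sim <- abs(crossprod(normalize(est), normalize(truth)))
  K <- ncol(truth)
  # perm[j] is the estimated column matched to true column j
  scores <- vapply(all_perms(seq_len(K)),
                   function(perm) sum(sim[cbind(perm, seq_len(K))]),
                   numeric(1))
  max(scores) / K
}

truth <- cbind(c(1, 1, 0, 0), c(0, 0, 1, 1), c(1, 0, 1, 0))

# Same columns, permuted and rescaled: the metric ignores order and scale.
est_shuffled <- 2 * truth[, c(3, 1, 2)]
sim_shuffled <- matched_cosine_similarity(est_shuffled, truth)

# One estimated column is denser than its true counterpart.
est_dense <- truth
est_dense[3, 1] <- 1
sim_dense <- matched_cosine_similarity(est_dense, truth)
```

The permuted and rescaled estimate attains the maximum similarity, while the estimate with one overly dense column scores strictly lower. This is the kind of gap that the dense estimates noted above contribute to.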

For comparison, EBCD

For comparison, I try running greedy EBCD on the data.

library(ebcd)

ebcd_init_obj <- ebcd_init(X = t(sim_data$Y))

greedy_ebcd_fit <- ebcd_greedy(ebcd_init_obj, Kmax = 10, ebnm_fn = ebnm::ebnm_point_exponential)

This is a heatmap of the estimate from greedy EBCD, \(\hat{L}_{ebcd-greedy}\):

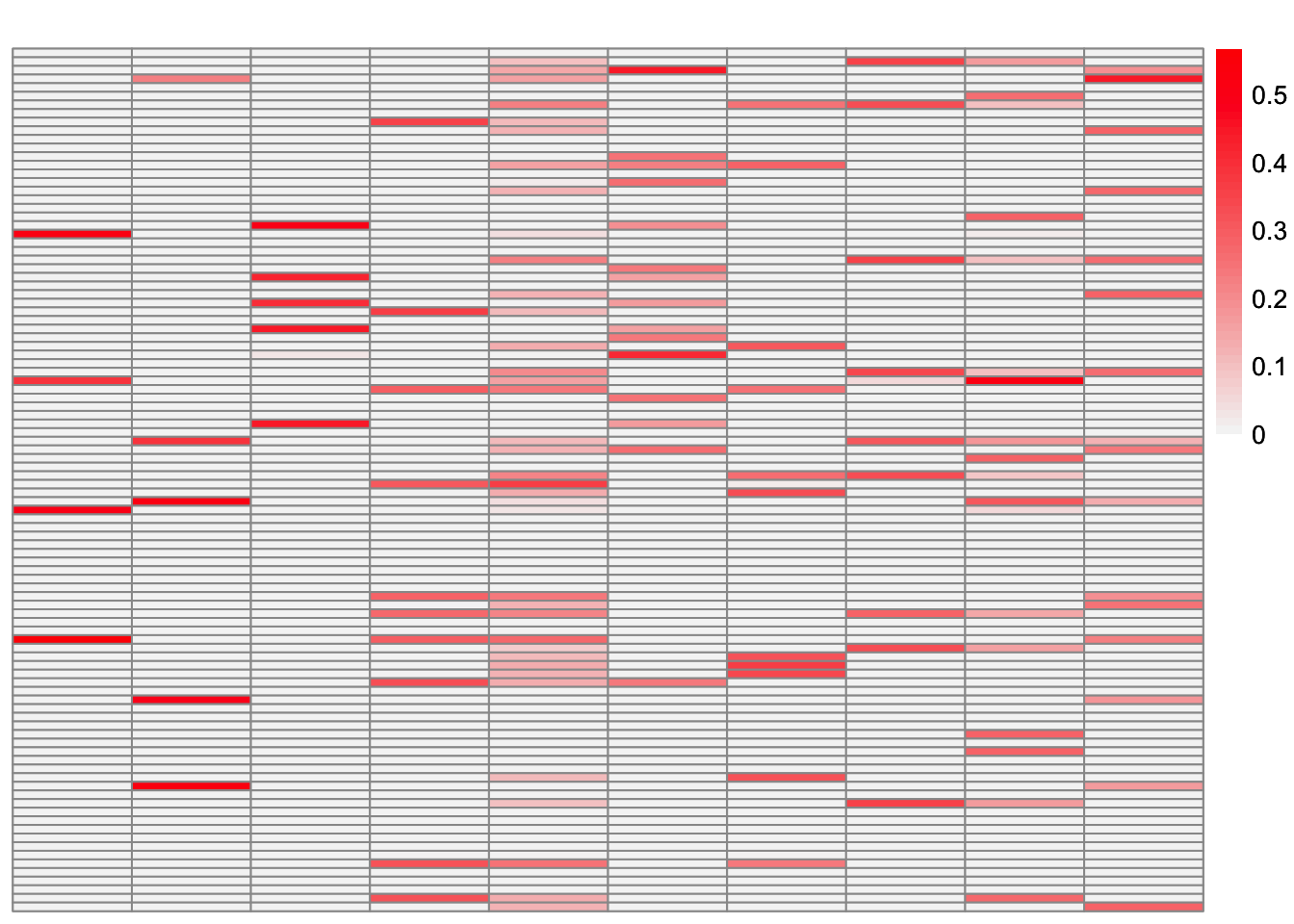

plot_heatmap(greedy_ebcd_fit$EL, brks = seq(0, max(greedy_ebcd_fit$EL), length.out = 50))

This is the crossproduct similarity of \(\hat{L}_{ebcd-greedy}\):

compute_crossprod_similarity(greedy_ebcd_fit$EL, sim_data$LL)

[1] 0.852768

Now, we run EBCD’s backfit method.

ebcd_backfit_fit <- ebcd_backfit(greedy_ebcd_fit)

This is a heatmap of the estimate from EBCD with backfit, \(\hat{L}_{ebcd-backfit}\):

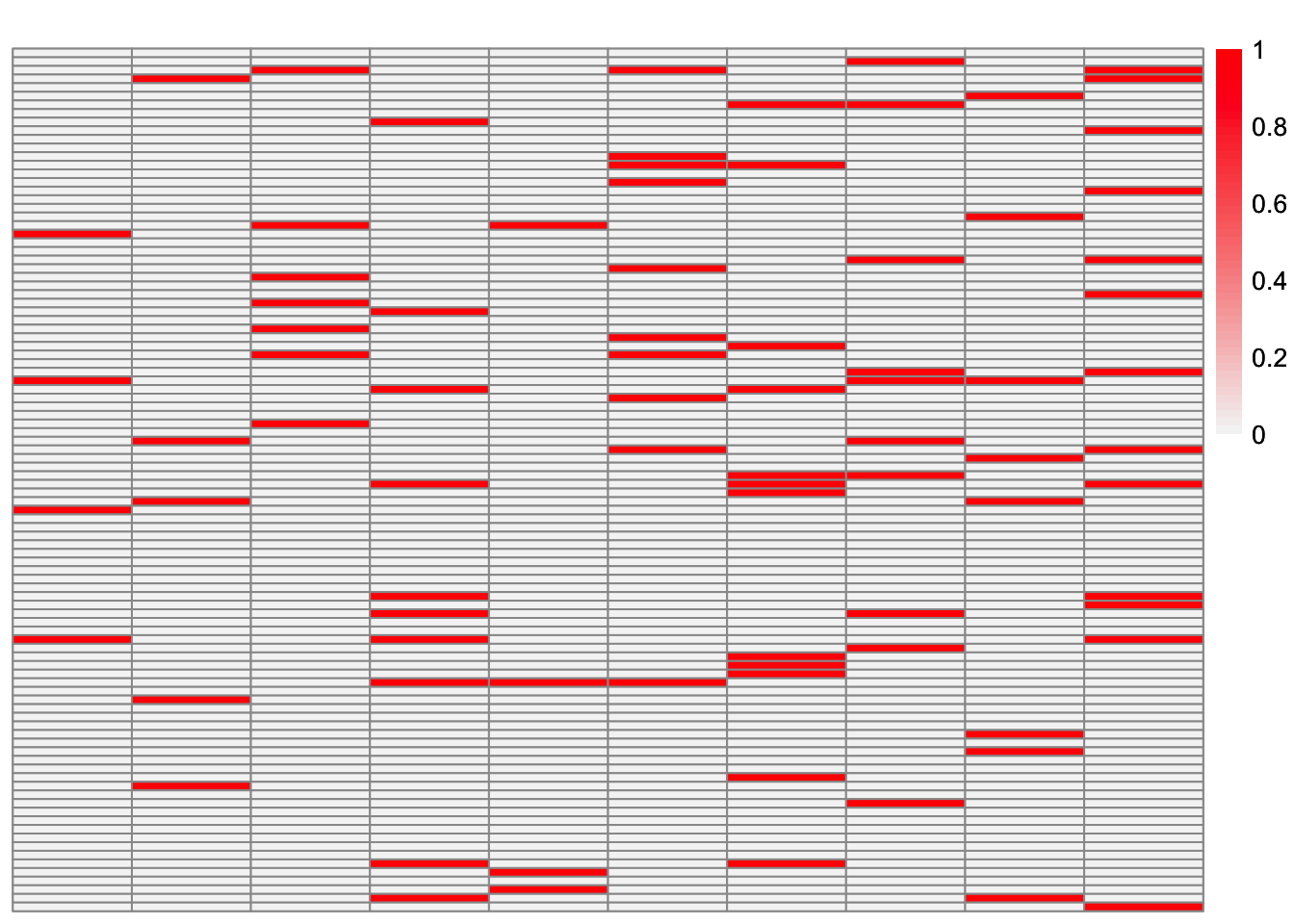

plot_heatmap(ebcd_backfit_fit$EL, brks = seq(0, max(ebcd_backfit_fit$EL), length.out = 50))

This is a heatmap of the true loadings matrix:

plot_heatmap(sim_data$LL)

This is a heatmap of \(\hat{L}_{ebcd-backfit}\). The columns have been permuted to best match the true loadings matrix.

ebcd_backfit_fit_L_permuted <- permute_L(ebcd_backfit_fit$EL, sim_data$LL)

plot_heatmap(ebcd_backfit_fit_L_permuted, brks = seq(0, max(ebcd_backfit_fit_L_permuted), length.out = 50))

This is the crossproduct similarity of \(\hat{L}_{ebcd-backfit}\):

compute_crossprod_similarity(ebcd_backfit_fit$EL, sim_data$LL)

[1] 0.9990405

We see that greedy EBCD performs comparably to greedy symEBcovMF (crossproduct similarity of 0.853 vs. 0.846), while backfitting raises EBCD’s similarity to 0.999. So part of the reason EBCD performs so well in this setting is its backfitting, and I suspect that backfitting will help symEBcovMF obtain a better estimate as well.

symEBcovMF with Backfit

Now, we try backfitting (also with a point-exponential prior):

symebcovmf_fit_backfit <- sym_ebcovmf_backfit(sim_data$YYt, symebcovmf_overlap_refit_fit, ebnm_fn = ebnm::ebnm_point_exponential, backfit_maxiter = 100)

[1] "elbo decreased by 7.27595761418343e-12"

[1] "elbo decreased by 1.09139364212751e-11"

[1] "elbo decreased by 8.18545231595635e-12"

[1] "elbo decreased by 1.2732925824821e-11"

[1] "elbo decreased by 1.81898940354586e-12"

[1] "elbo decreased by 1.04591890703887e-11"

[1] "elbo decreased by 6.3664629124105e-12"

[1] "elbo decreased by 1.36424205265939e-11"

[1] "elbo decreased by 2.27373675443232e-12"

[1] "elbo decreased by 2.72848410531878e-12"

[1] "elbo decreased by 4.54747350886464e-12"

[1] "elbo decreased by 1.40971678774804e-11"

[1] "elbo decreased by 1.36424205265939e-12"

[1] "elbo decreased by 4.09272615797818e-12"

[1] "elbo decreased by 5.45696821063757e-12"

[1] "elbo decreased by 1.31876731757075e-11"

[1] "elbo decreased by 3.18323145620525e-12"

[1] "elbo decreased by 8.18545231595635e-12"

[1] "elbo decreased by 3.18323145620525e-12"

[1] "elbo decreased by 8.18545231595635e-12"

[1] "elbo decreased by 4.54747350886464e-13"

[1] "elbo decreased by 1.40971678774804e-11"

[1] "elbo decreased by 2.00088834390044e-11"

[1] "elbo decreased by 9.09494701772928e-13"

[1] "elbo decreased by 3.63797880709171e-12"

[1] "elbo decreased by 9.09494701772928e-13"

[1] "elbo decreased by 5.0022208597511e-12"

[1] "elbo decreased by 9.09494701772928e-13"

[1] "elbo decreased by 4.54747350886464e-13"

[1] "elbo decreased by 8.18545231595635e-12"

[1] "elbo decreased by 1.81898940354586e-12"

[1] "elbo decreased by 9.09494701772928e-13"

[1] "elbo decreased by 1.45519152283669e-11"

[1] "elbo decreased by 1.68256519827992e-11"

[1] "elbo decreased by 1.50066625792533e-11"

[1] "elbo decreased by 5.0022208597511e-12"

[1] "elbo decreased by 1.81898940354586e-12"

[1] "elbo decreased by 1.36424205265939e-12"

[1] "elbo decreased by 1.00044417195022e-11"

[1] "elbo decreased by 1.13686837721616e-11"

[1] "elbo decreased by 5.45696821063757e-12"

[1] "elbo decreased by 3.63797880709171e-12"

[1] "elbo decreased by 4.54747350886464e-13"

[1] "elbo decreased by 2.27373675443232e-12"

[1] "elbo decreased by 1.36424205265939e-11"

[1] "elbo decreased by 5.91171556152403e-12"

[1] "elbo decreased by 6.3664629124105e-12"

[1] "elbo decreased by 2.00088834390044e-11"

[1] "elbo decreased by 4.09272615797818e-12"

[1] "elbo decreased by 1.18234311230481e-11"

[1] "elbo decreased by 1.54614099301398e-11"

[1] "elbo decreased by 1.81898940354586e-12"

[1] "elbo decreased by 2.72848410531878e-12"

[1] "elbo decreased by 4.54747350886464e-13"

[1] "elbo decreased by 4.54747350886464e-12"

[1] "elbo decreased by 7.27595761418343e-12"

[1] "elbo decreased by 1.36424205265939e-12"

[1] "elbo decreased by 2.72848410531878e-12"

[1] "elbo decreased by 2.27373675443232e-12"

[1] "elbo decreased by 2.27373675443232e-12"

[1] "elbo decreased by 4.54747350886464e-13"

[1] "elbo decreased by 5.45696821063757e-12"

This is a scatter plot of \(\hat{L}_{backfit}\), the estimate from symEBcovMF with backfit:

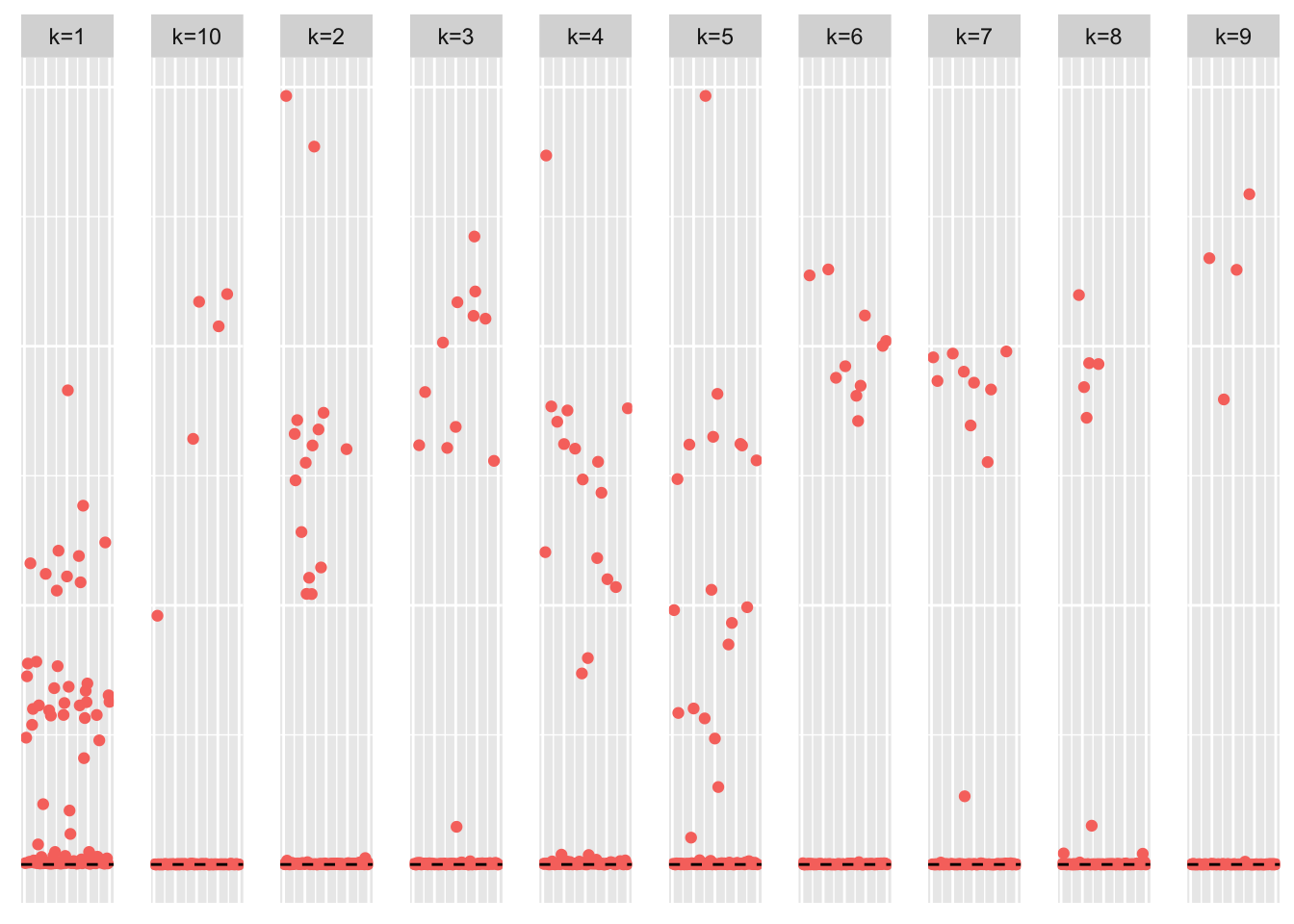

plot_loadings(symebcovmf_fit_backfit$L_pm %*% diag(sqrt(symebcovmf_fit_backfit$lambda)), pop_vec, legendYN = FALSE)

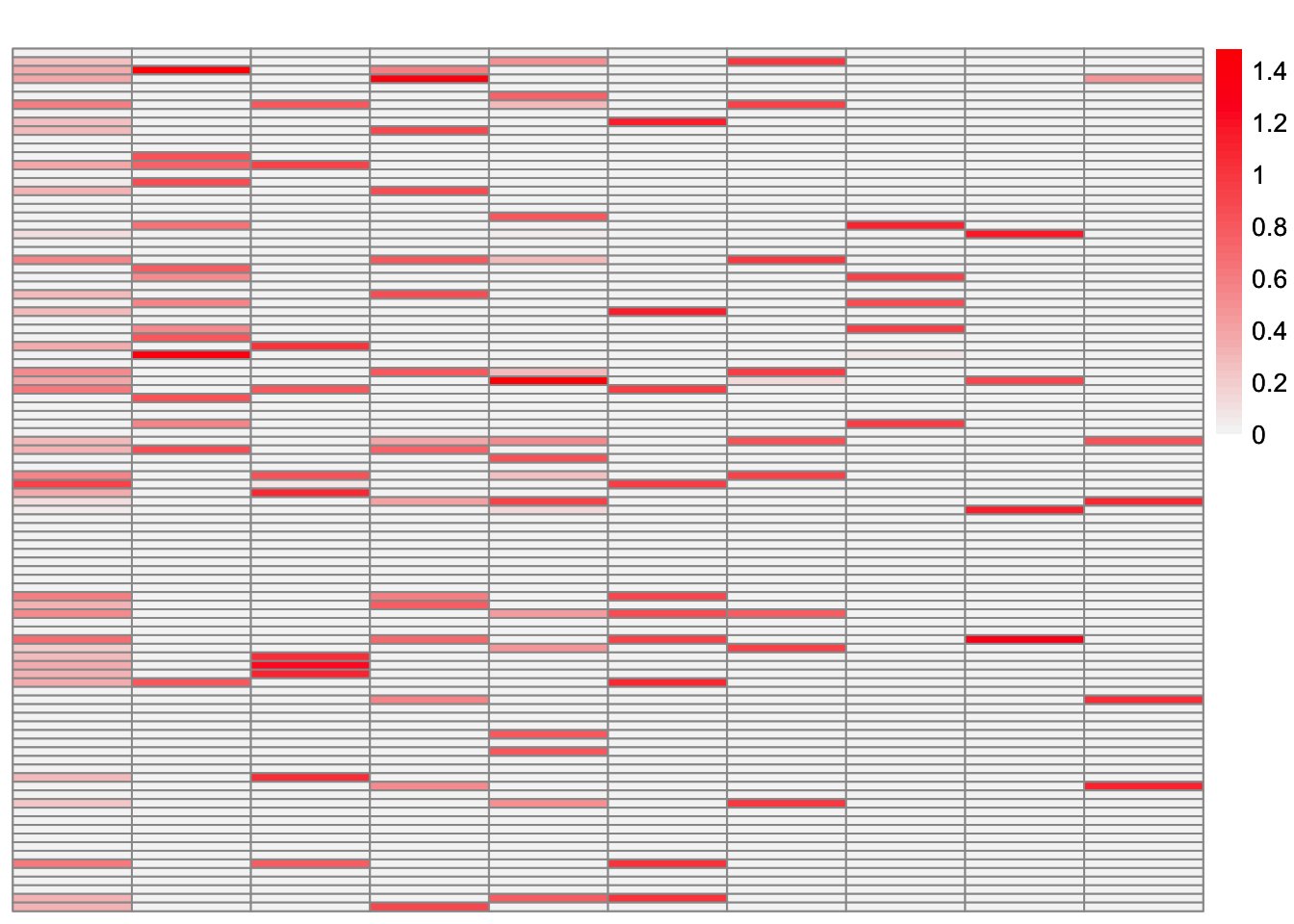

This is a heatmap of the true loadings matrix:

plot_heatmap(sim_data$LL)

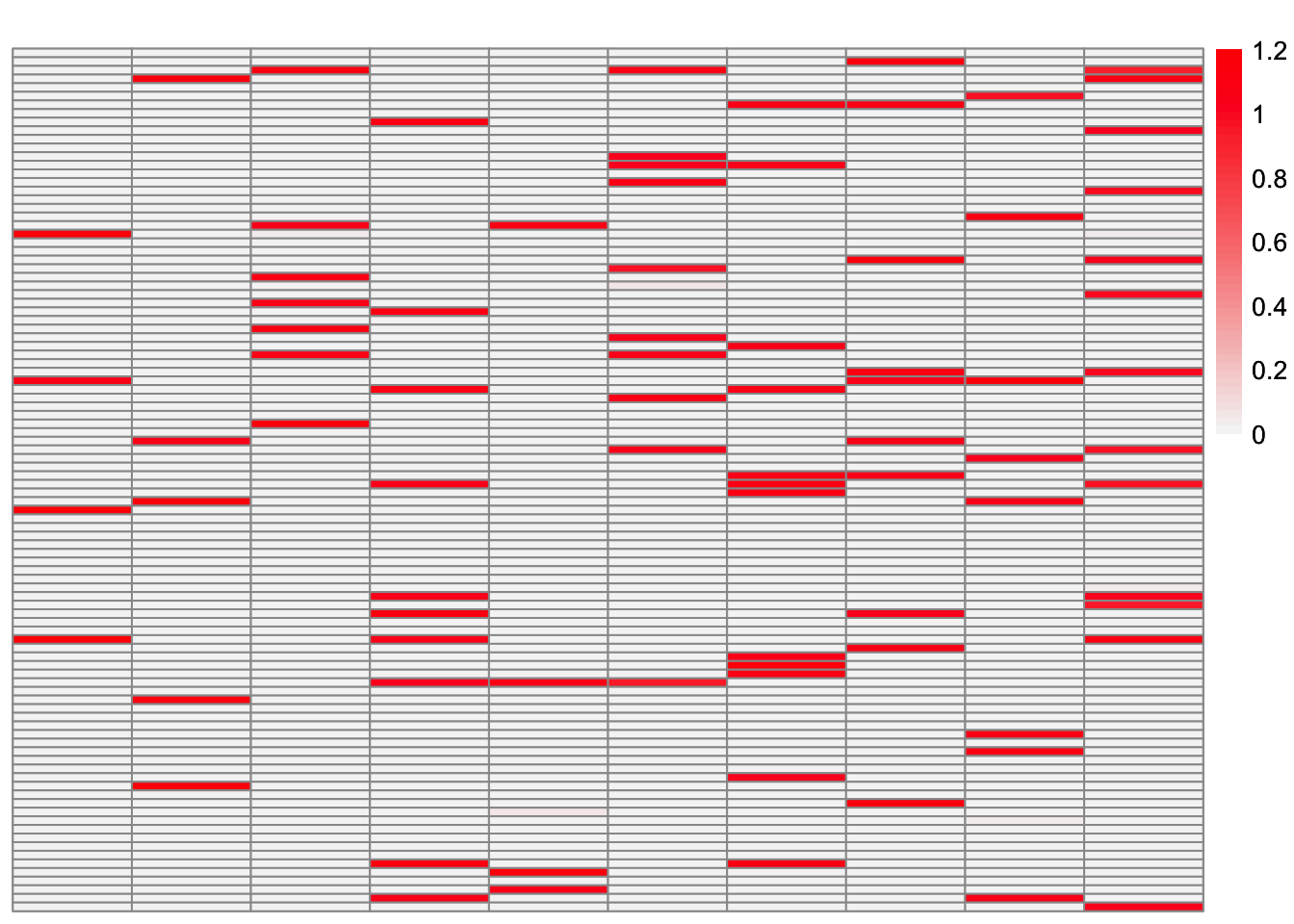

This is a heatmap of \(\hat{L}_{backfit}\). The columns have been permuted to match the true loadings matrix:

symebcovmf_fit_backfit_L_permuted <- permute_L(symebcovmf_fit_backfit$L_pm, sim_data$LL)

plot_heatmap(symebcovmf_fit_backfit_L_permuted, brks = seq(0, max(symebcovmf_fit_backfit_L_permuted), length.out = 50))

This is the objective function value attained:

symebcovmf_fit_backfit$elbo

[1] 3019.79

This is the crossproduct similarity of \(\hat{L}_{backfit}\):

compute_crossprod_similarity(symebcovmf_fit_backfit$L_pm, sim_data$LL)

[1] 0.8984952

Observations

Visually, the estimate after backfitting appears to better match the true loadings matrix. This is corroborated by the increased crossproduct similarity (0.898, up from 0.846 for the greedy fit). For many of the factors, the estimates are very close to the true values. However, the estimate for factor 5 is noticeably off. After further inspection, we see that the best estimate for factor 3 captures the shared effects from the true factor 5 along with the shared effects from the true factor 3.

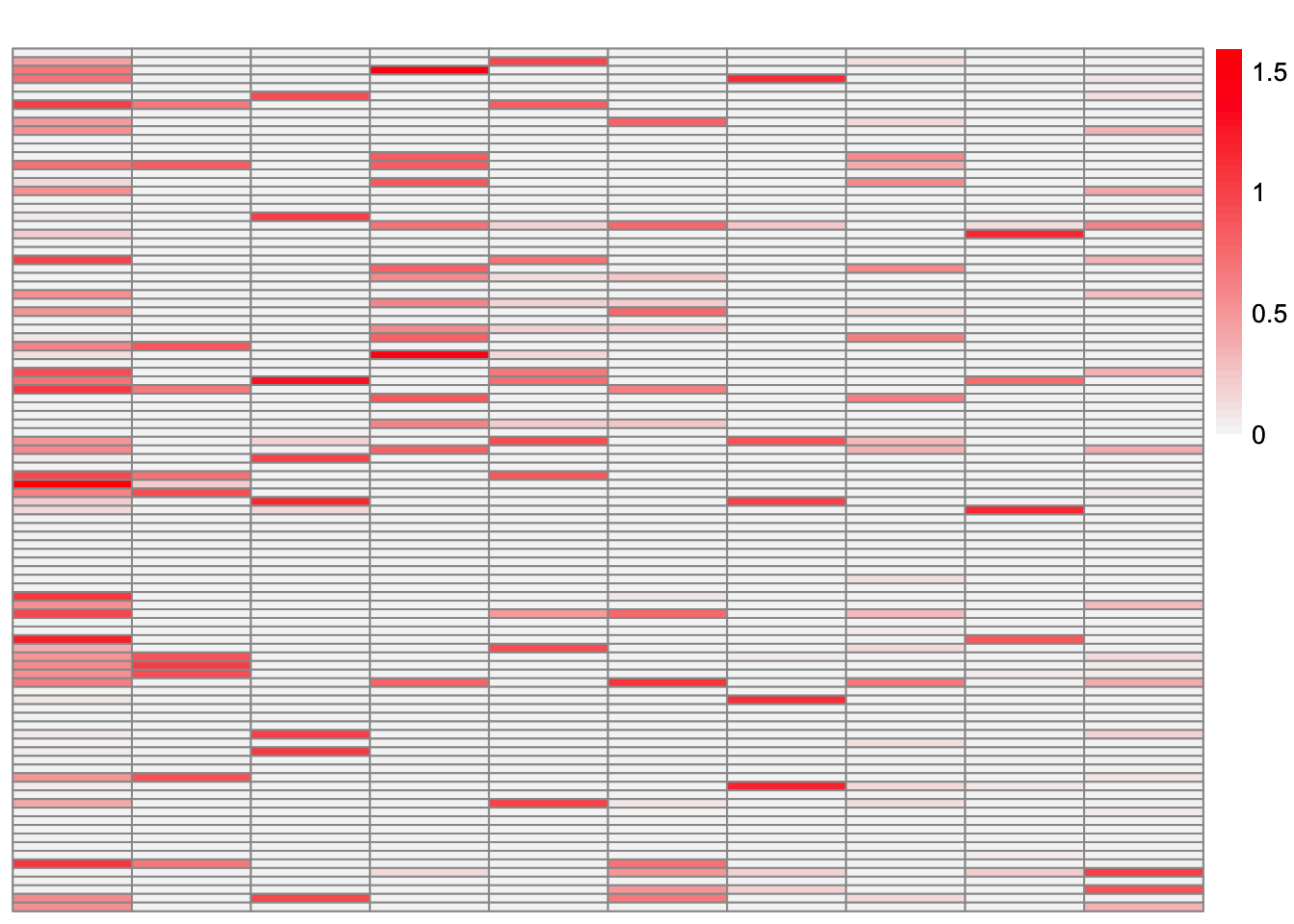

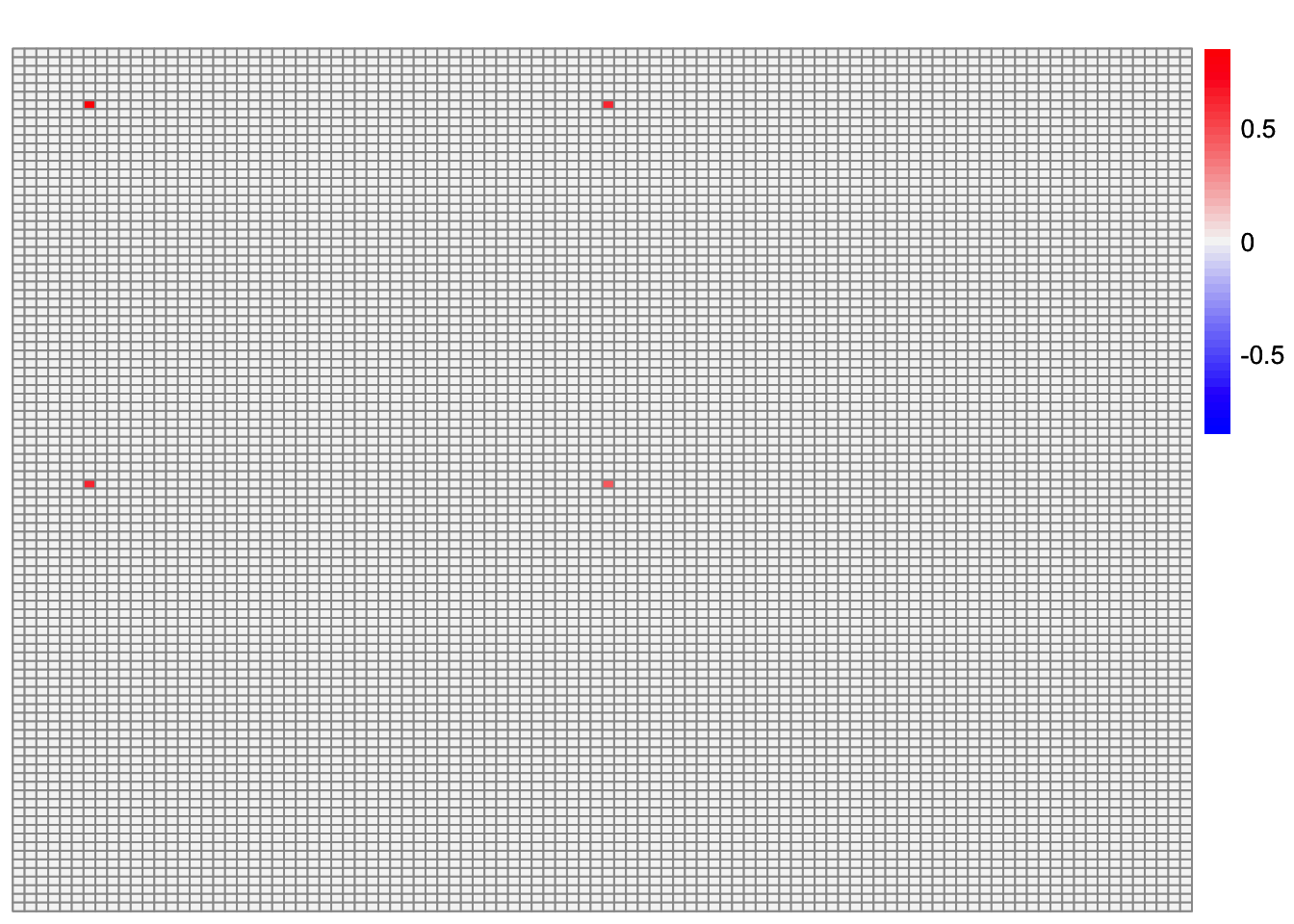

Here, I look at the residual matrix obtained by subtracting every fitted component except the estimate for factor 5 (the two-individual factor, which is the first column of L_pm):

R <- sim_data$YYt - tcrossprod(symebcovmf_fit_backfit$L_pm[,c(2:10)] %*% diag(sqrt(symebcovmf_fit_backfit$lambda[2:10])))

plot_heatmap(R, colors_range = c('blue','gray96','red'), brks = seq(-max(abs(R)), max(abs(R)), length.out = 50))

This is the component generated from the estimate of factor 5:

component_fac5 <- tcrossprod(sqrt(symebcovmf_fit_backfit$lambda[1])*symebcovmf_fit_backfit$L_pm[,1])

plot_heatmap(component_fac5, colors_range = c('blue','gray96','red'), brks = seq(-max(abs(component_fac5)), max(abs(component_fac5)), length.out = 50))

It doesn’t make sense to me why this component would be chosen given the residual matrix.
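For reference, here is a small sketch of how these two quantities relate in an idealized case. It assumes a toy matrix that is exactly rank three with unit-norm loading columns and no noise. In that case the leave-one-out residual for factor k equals the rank-one component of factor k, and the scale update from optimize_factor, lambda_k = max(v' R v, 0), recovers the true scale. The names L_ideal, lambda_ideal, S_ideal, and the toy values are made up for illustration. In the fit above, the Gram matrix is noisy and the estimated factors overlap, so this exact agreement is not expected.

```r
# Idealized toy example (base R only): S = sum_j lambda_j * l_j l_j'
L_ideal <- cbind(c(1, 1, 0, 0), c(0, 0, 1, 1), c(1, 0, 1, 0)) / sqrt(2)  # unit-norm columns
lambda_ideal <- c(4, 3, 2)
S_ideal <- tcrossprod(L_ideal %*% diag(sqrt(lambda_ideal)))

k <- 3

# Leave-one-out residual: subtract every component except the k-th
R_k <- S_ideal - tcrossprod(L_ideal[, -k] %*% diag(sqrt(lambda_ideal[-k])))

# Rank-one component implied by the k-th factor
component_k <- tcrossprod(sqrt(lambda_ideal[k]) * L_ideal[, k])

# Scale update used in the backfit: lambda_k = max(v' R v, 0)
v <- L_ideal[, k]
lambda_k_hat <- max(as.numeric(t(v) %*% R_k %*% v), 0)

# In this noiseless setting the residual and the component coincide
max_abs_gap <- max(abs(R_k - component_k))
```

One way to use this as a diagnostic is to compute the same gap, max(abs(R - component_fac5)), for the real fit and see which entries of the residual the factor 5 component fails to explain.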

sessionInfo()

R version 4.3.2 (2023-10-31)

Platform: aarch64-apple-darwin20 (64-bit)

Running under: macOS 15.4.1

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRlapack.dylib; LAPACK version 3.11.0

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

time zone: America/Chicago

tzcode source: internal

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] ebcd_0.0.0.9000 lpSolve_5.6.20 ggplot2_3.5.1 pheatmap_1.0.12

[5] ebnm_1.1-34 workflowr_1.7.1

loaded via a namespace (and not attached):

[1] gtable_0.3.5 xfun_0.48 bslib_0.8.0 processx_3.8.4

[5] lattice_0.22-6 callr_3.7.6 vctrs_0.6.5 tools_4.3.2

[9] ps_1.7.7 generics_0.1.3 tibble_3.2.1 fansi_1.0.6

[13] highr_0.11 pkgconfig_2.0.3 Matrix_1.6-5 SQUAREM_2021.1

[17] RColorBrewer_1.1-3 lifecycle_1.0.4 truncnorm_1.0-9 farver_2.1.2

[21] compiler_4.3.2 stringr_1.5.1 git2r_0.33.0 munsell_0.5.1

[25] getPass_0.2-4 httpuv_1.6.15 htmltools_0.5.8.1 sass_0.4.9

[29] yaml_2.3.10 later_1.3.2 pillar_1.9.0 jquerylib_0.1.4

[33] whisker_0.4.1 cachem_1.1.0 trust_0.1-8 RSpectra_0.16-2

[37] tidyselect_1.2.1 digest_0.6.37 stringi_1.8.4 dplyr_1.1.4

[41] ashr_2.2-66 labeling_0.4.3 splines_4.3.2 rprojroot_2.0.4

[45] fastmap_1.2.0 grid_4.3.2 colorspace_2.1-1 cli_3.6.3

[49] invgamma_1.1 magrittr_2.0.3 utf8_1.2.4 withr_3.0.1

[53] scales_1.3.0 promises_1.3.0 horseshoe_0.2.0 rmarkdown_2.28

[57] httr_1.4.7 deconvolveR_1.2-1 evaluate_1.0.0 knitr_1.48

[61] irlba_2.3.5.1 rlang_1.1.4 Rcpp_1.0.13 mixsqp_0.3-54

[65] glue_1.8.0 rstudioapi_0.16.0 jsonlite_1.8.9 R6_2.5.1

[69] fs_1.6.4